Strengthening Application Security with AI-Powered Vulnerability Scanning

The Evolution of Static Analysis and Automated Penetration Testing

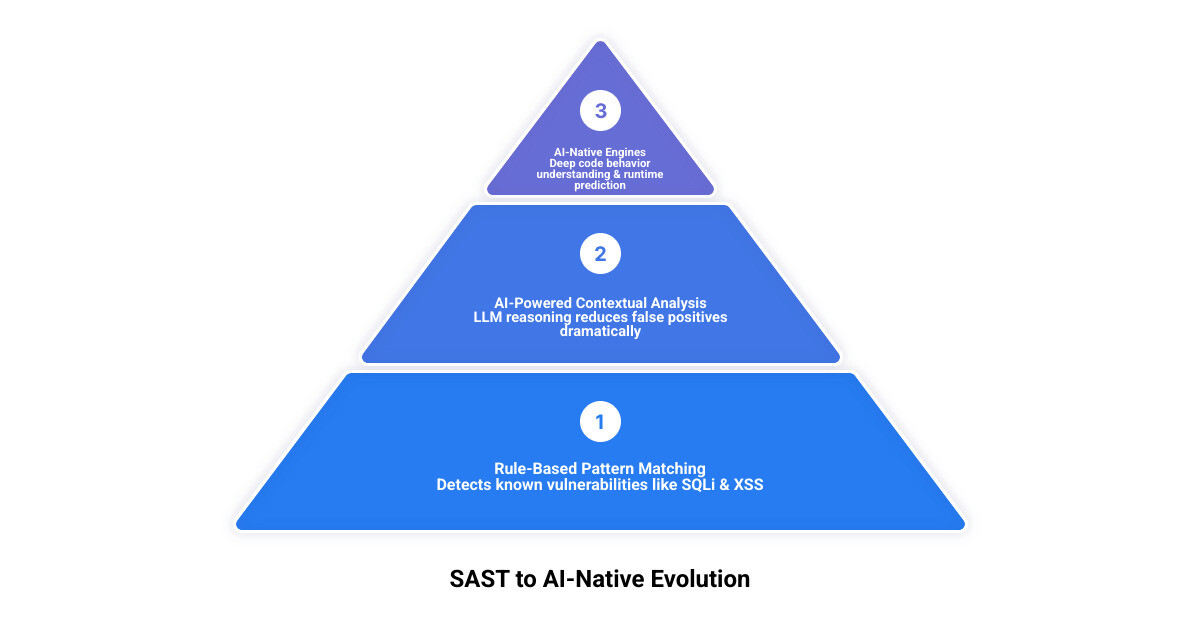

Traditional Static Application Security Testing (SAST) has long been a foundational pillar of secure software development. By analyzing source code, bytecode, or binaries without executing the application, SAST tools identify potential vulnerabilities early in the development lifecycle. This “shift-left” approach is crucial for catching security flaws when they are less costly and complex to fix. Historically, SAST relied on predefined rules and patterns to flag common issues such as SQL injection and cross-site scripting. While effective against known vulnerabilities, this approach often struggles with context-dependent flaws, leading to a high volume of false positives and a significant burden on security teams.

The advent of Artificial Intelligence (AI) is fundamentally reshaping this landscape. We are moving beyond rigid, deterministic rules towards more intelligent, adaptive analysis. Modern AI-powered SAST tools leverage advanced techniques, including Large Language Models (LLMs), to better understand code behavior and context. This enables more precise vulnerability detection and can substantially reduce the noise that has plagued traditional SAST. The integration of AI turns static analysis from a simple pattern-matching exercise into a reasoning process capable of interpreting the nuances of code logic.

The shift from traditional, rule-based SAST to AI-native engines represents a significant leap in our ability to secure applications.

Bridging the Gap Between SAST and Automated Penetration Testing

While SAST excels at identifying vulnerabilities within the code itself, it inherently operates without understanding the application’s runtime behavior or its interaction with external systems. This is where Dynamic Application Security Testing (DAST) and other automated penetration testing tools come into play, evaluating the application from an attacker’s perspective while it’s running. The challenge has always been bridging the insights from static analysis with the real-world context of dynamic testing to form a comprehensive security posture.

AI is proving instrumental in closing this gap. By enhancing SAST’s contextual understanding, AI can help predict how static vulnerabilities might manifest at runtime. For instance, an AI-enhanced SAST tool can not only identify a potential SQL injection point but also, by understanding data flow and architectural context, assess its likelihood of being exploited in a live environment. This richer understanding enables security teams to prioritize findings more effectively, focusing on those that pose the greatest risk to the application’s attack surface. Furthermore, AI can help correlate findings from both SAST and DAST, providing a more holistic view of an application’s security, informing more targeted automated penetration tests, and contributing to a more robust vulnerability management strategy.
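As a minimal sketch of the correlation idea, assume each scanner's output has already been normalized into records carrying a CWE ID and the HTTP route the code serves. The `Finding` type, its fields, and the 1.5x boost are hypothetical choices for illustration, not any tool's schema. A SAST finding is promoted when a DAST scan confirms the same weakness on the same route:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    source: str        # "sast" or "dast"
    cwe: int           # e.g. 89 for SQL injection
    route: str         # HTTP route the affected code path serves, e.g. "/orders"
    base_score: float  # scanner-assigned severity on a 0-10 scale


def correlate(sast: list[Finding], dast: list[Finding]) -> list[tuple[Finding, float, bool]]:
    """Rank SAST findings, boosting those confirmed at runtime by a DAST hit."""
    confirmed = {(f.cwe, f.route) for f in dast}
    ranked = []
    for f in sast:
        is_confirmed = (f.cwe, f.route) in confirmed
        # Hypothetical weighting: runtime confirmation raises priority by 50%, capped at 10.
        score = min(10.0, f.base_score * 1.5) if is_confirmed else f.base_score
        ranked.append((f, score, is_confirmed))
    return sorted(ranked, key=lambda item: item[1], reverse=True)
```

Here a medium-severity SQL injection that DAST reproduced can outrank a higher-severity static finding that was never observed at runtime, which is the kind of reprioritization the paragraph above describes.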

Future Trends in Automated Penetration Testing and Agentic Security

Looking ahead to 2026 and beyond, the evolution of AI in application security points towards increasingly sophisticated and autonomous systems. One of the most exciting trends is the rise of “agentic” security. This concept involves AI agents that can not only identify vulnerabilities but also plan, execute, and validate remediation steps, moving towards truly self-healing code. These agentic systems could revolutionize how we handle security incidents, enabling autonomous triage and remediation that significantly reduces human intervention.

We anticipate deeper integrations into Integrated Development Environments (IDEs) and CI/CD pipelines, making security feedback even more immediate and actionable. Imagine an AI agent that detects a vulnerability, proposes a fix, tests it, and even creates a merge-ready pull request, all within minutes of a developer writing the code. This level of automation promises to drastically cut down remediation time and developer friction. The future of automated penetration testing will likely see AI playing a central role in orchestrating complex security workflows, from proactive vulnerability detection to intelligent, agentic remediation, ensuring that security keeps pace with the accelerated rhythm of modern software development.

Technical Distinctions: AI-Powered vs. AI-Native Security Tools

The terms “AI-powered” and “AI-native” are often used interchangeably in the security industry, but they represent distinct architectural approaches to integrating artificial intelligence into SAST. Understanding this difference is crucial to evaluating a security tool’s true capabilities.

| Feature | AI-Powered Traditional SAST | AI-Native SAST |
| --- | --- | --- |
| AI Role | Augments existing traditional SAST engines (post-analysis) | Core component of the detection engine (during analysis) |
| Detection Mechanism | Rule-based, pattern matching, data flow analysis (traditional) | LLM-driven contextual reasoning, code behavior understanding, deep analysis |
| Contextual Understanding | Limited to predefined rules; AI adds context post-scan | Deep, project-level understanding of architectural context and business logic |
| False Positive Handling | AI helps triage, prioritize, and explain findings after initial scan | AI is integrated into detection to inherently reduce noise and false positives |
| Vulnerability Types | Excels at known patterns; AI helps interpret findings | Better at complex logic flaws, authentication issues, and novel vulnerabilities |
| Architectural Basis | Layers AI on legacy SAST engines | Built from the ground up with AI/LLMs as the primary analysis engine |

AI-powered SAST typically refers to tools that enhance their existing, traditional SAST engines with AI capabilities. The core detection mechanism still largely relies on established static analysis techniques, such as building abstract syntax trees (ASTs) and data flow graphs to trace data movement and identify predefined patterns. AI is then applied after this initial scan to improve the output.

In contrast, AI-native SAST tools are designed with AI at their core, integrating LLMs and advanced machine learning techniques directly into the detection process. This approach allows for a much deeper contextual understanding of the code, moving beyond simple pattern recognition to reason about code behavior and intent. This fundamental difference impacts everything from the types of vulnerabilities detected to the signal-to-noise ratio of the findings.
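To make the traditional side of this comparison concrete, the sketch below shows the kind of deterministic, AST-based pattern rule a conventional SAST engine applies. It uses Python's standard `ast` module; the rule itself (flag any `.execute()` call whose first argument is an f-string, `%`-formatting, or `+` concatenation) is an illustrative assumption, not any product's rule set:

```python
import ast


def find_sql_string_building(source: str) -> list[int]:
    """Return line numbers where .execute() receives a dynamically built string."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
        ):
            query = node.args[0]
            # f-strings parse to JoinedStr; "..." % x and "..." + x parse to BinOp.
            if isinstance(query, ast.JoinedStr) or (
                isinstance(query, ast.BinOp) and isinstance(query.op, (ast.Mod, ast.Add))
            ):
                hits.append(node.lineno)
    return sorted(hits)  # ast.walk is breadth-first, so sort into source order
```

The rule has no notion of whether the interpolated value was already validated or whether the function is ever called. That missing context is exactly what the AI layers described in this section try to supply, either after the scan (AI-powered) or during it (AI-native).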

Core Capabilities of AI-Powered Traditional SAST

AI-powered traditional SAST tools primarily leverage AI to improve the post-scan experience. After the core SAST engine completes its analysis, AI is employed to:

- Triage and Prioritize: AI algorithms can analyze raw findings from the SAST engine and correlate them with contextual information (e.g., code ownership, exploitability, business impact) to prioritize the most critical vulnerabilities. This helps security teams focus their efforts on high-risk issues rather than sifting through a “wall of alerts.”

- Explain Findings: AI can generate natural-language explanations of detected vulnerabilities, making them easier for developers to understand. This includes describing the vulnerability, its potential impact, and providing initial remediation guidance.

- Suggest Remediation: Based on the identified vulnerability and code context, AI can suggest code fixes or remediation steps. These suggestions often draw from vast datasets of secure coding practices and past fixes.
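A minimal sketch of the post-scan triage step, assuming the underlying engine reports a severity per finding and that exploitability and business impact have already been estimated upstream (for example by an AI model or ownership metadata). The field names and the multiplicative score are illustrative assumptions, not a standard formula:

```python
from dataclasses import dataclass

SEVERITY_WEIGHT = {"low": 1, "medium": 3, "high": 7, "critical": 10}


@dataclass
class RawFinding:
    rule_id: str
    severity: str            # as reported by the underlying SAST engine
    exploitability: float    # 0.0-1.0, hypothetical output of an AI triage model
    business_impact: float   # 0.0-1.0, e.g. derived from which service owns the code


def triage(findings: list[RawFinding], top_n: int = 10) -> list[tuple[str, float]]:
    """Score each raw finding and return the top_n (rule_id, score) pairs, riskiest first."""
    scored = [
        (f.rule_id, SEVERITY_WEIGHT[f.severity] * f.exploitability * f.business_impact)
        for f in findings
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]
```

With this weighting, a "critical" finding that the model judges nearly unexploitable can drop below a "medium" one in business-critical code, which is how triage thins out the "wall of alerts."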

While these enhancements significantly improve the usability and efficiency of traditional SAST, the underlying detection capabilities remain largely dependent on the legacy rule engines. The AI acts as a sophisticated assistant, making the output of traditional SAST more digestible and actionable.

The Rise of AI-Native Contextual Reasoning

AI-native SAST represents a paradigm shift, where AI, particularly LLMs, is integrated directly into the core detection process. Instead of merely augmenting existing engines, these tools are built from the ground up to leverage AI’s capabilities for deep contextual reasoning.

Key characteristics of AI-native SAST include:

- Ground-up LLM Integration: LLMs are used to understand the semantic meaning and intent of the code, not just its syntactic structure. This allows the tool to “reason” about code paths, data flows, and potential interactions in ways traditional SAST cannot.

- Architectural Context Awareness: AI-native tools can comprehend the broader architectural context of an application, understanding how different components interact. This is crucial for identifying vulnerabilities that span multiple files or modules, which are often missed by traditional, localized scans.

- Business Logic Understanding: One of the most significant advantages is the ability to detect business logic flaws. These are vulnerabilities that arise from faulty application design or implementation, rather than common coding errors. Traditional SAST struggles here because these flaws don’t conform to simple patterns. AI, by understanding the intended logic, can identify deviations that lead to security issues.

- Deep Reasoning and Lower False Positives: By integrating AI into the detection phase, these tools can perform more sophisticated analysis, which can substantially lower the false-positive rate. They aim to distinguish theoretical vulnerabilities from those that are genuinely exploitable within the application’s specific context, improving the signal-to-noise ratio.

This approach offers a more intelligent and accurate way to find vulnerabilities, especially those complex flaws that have historically required manual security reviews.

Enhancing Detection and Remediation of Complex Vulnerabilities

AI-powered SAST tools are becoming increasingly adept at identifying a broader range of vulnerabilities, moving beyond low-hanging fruit to tackle more complex and elusive flaws. While traditional SAST has always been strong at detecting common issues like SQL injection and cross-site scripting (XSS) due to their recognizable patterns, AI brings a new level of precision and depth.

For instance, AI-driven analysis can better trace tainted data through an application, identifying SQL injection vulnerabilities even when input sanitization is performed in a non-standard way or across multiple functions. Similarly, for XSS, AI can understand how user-controlled input might eventually render in a browser context, even after several transformations. Buffer overflows, particularly in languages like C/C++, benefit from AI’s ability to analyze memory access patterns and pointer arithmetic with greater nuance than purely rule-based systems.
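A toy version of source-to-sink taint tracking helps show what "tracing tainted data across multiple functions" means. Under heavily simplified assumptions, the program is modeled as a hypothetical straight-line list of steps (each reads user input, copies or combines variables, passes through a sanitizer, or reaches a SQL sink). Real engines work on ASTs or intermediate representations with branches and calls; this only illustrates how taint propagates and is cleared:

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    target: str                                      # variable assigned ("" for a sink call)
    inputs: list[str] = field(default_factory=list)  # variables this step reads
    kind: str = "assign"                             # "source", "assign", "sanitize", or "sink"
    where: str = ""                                  # location, e.g. "repo.py:run_query"


def tainted_sinks(steps: list[Step]) -> list[str]:
    """Propagate taint through the steps in order; report sinks reached by tainted data."""
    tainted: set[str] = set()
    findings = []
    for s in steps:
        if s.kind == "source":
            tainted.add(s.target)
        elif s.kind == "sanitize":
            tainted.discard(s.target)  # sanitizer output is treated as clean
        elif s.kind == "assign":
            if any(v in tainted for v in s.inputs):
                tainted.add(s.target)
            else:
                tainted.discard(s.target)  # reassignment from clean data clears taint
        elif s.kind == "sink" and any(v in tainted for v in s.inputs):
            findings.append(s.where)
    return findings


# Hypothetical flow: an order ID travels from an API handler through two other
# modules into a query without ever being sanitized.
flow = [
    Step("order_id", kind="source", where="api.py:handler"),
    Step("oid", ["order_id"], where="service.py:load_order"),
    Step("q", ["oid"], where="repo.py:build_query"),
    Step("", ["q"], kind="sink", where="repo.py:run_query"),
]
```

Replacing the second step with a `"sanitize"` step makes the sink finding disappear, which is the behavior a scanner needs in order to recognize sanitization performed in a different function from the query itself.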

However, where AI truly shines is in its capacity to detect business logic flaws and authentication issues. These are vulnerabilities stemming from the application’s design or user interaction handling, rather than simple coding errors. For example, an AI-native tool might identify a scenario in which a user can bypass a payment step by manipulating the order of operations, or an authentication mechanism can be circumvented due to a subtle logical flaw.
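The payment-bypass scenario can be illustrated with a deliberately simplified checkout flow. The classes and methods below are hypothetical; the point is that nothing in the vulnerable version matches a classic injection pattern, yet a client can call `ship()` without ever calling `pay()`:

```python
class OrderStateError(Exception):
    """Raised when an order operation is attempted out of sequence."""


class VulnerableOrder:
    def __init__(self) -> None:
        self.paid = False
        self.shipped = False

    def pay(self) -> None:
        self.paid = True

    def ship(self) -> None:
        # Logic flaw: shipping never checks that payment happened, so a client
        # that skips the payment step still receives the goods.
        self.shipped = True


class FixedOrder(VulnerableOrder):
    def ship(self) -> None:
        # Enforce the intended order of operations on the server side.
        if not self.paid:
            raise OrderStateError("cannot ship an unpaid order")
        self.shipped = True
```

A pattern-matching rule has nothing to anchor on here. Spotting the flaw requires knowing that shipping is supposed to follow payment, which is the kind of intent-level reasoning the text attributes to AI-native analysis.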

AI-powered SAST tools commonly target a broad range of weaknesses catalogued in the Common Weakness Enumeration (CWE), including but not limited to:

- CWE-89: Improper Neutralization of Special Elements used in an SQL Command (‘SQL Injection’)

- CWE-79: Improper Neutralization of Input During Web Page Generation (‘Cross-site Scripting’)

- CWE-119: Improper Restriction of Operations within the Bounds of a Memory Buffer (Buffer Overflow)

- CWE-287: Improper Authentication

- CWE-200: Exposure of Sensitive Information to an Unauthorized Actor

- CWE-327: Use of a Broken or Risky Cryptographic Algorithm

- CWE-611: Improper Restriction of XML External Entity Reference (‘XXE’)

- CWE-78: Improper Neutralization of Special Elements used in an OS Command (‘OS Command Injection’)

- CWE-352: Cross-Site Request Forgery (CSRF)

- CWE-434: Unrestricted Upload of File with Dangerous Type
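As a concrete reference point for the first entry in this list, here is CWE-89 in its simplest form using Python's built-in `sqlite3` module, next to the parameterized-query fix that scanners, AI-based or not, typically recommend. The table, data, and function names are made up for illustration:

```python
import sqlite3


def setup() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
    conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 0), ("root", 1)])
    return conn


def find_user_vulnerable(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # CWE-89: user input is spliced directly into the SQL text.
    return conn.execute(f"SELECT name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Fix: the driver binds the value as data, never as SQL syntax.
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


conn = setup()
payload = "x' OR '1'='1"
print(find_user_vulnerable(conn, payload))  # the injected OR clause matches every row
print(find_user_safe(conn, payload))        # no user is literally named "x' OR '1'='1"
```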

Advancing Beyond Simple Pattern Matching

The real power of AI in enhancing detection lies in its ability to go beyond simple pattern matching. Techniques like dataflow reachability analysis and source-to-sink tracing, when augmented by AI, become significantly more effective. Dataflow reachability analysis determines whether a vulnerable piece of code is actually reachable and potentially exploitable by an attacker, reducing false positives from dead or unreachable code. One vendor, for instance, claims to reduce false positives in high- and critical-severity dependency vulnerabilities by up to 98% through dataflow reachability analysis. Similarly, AI-enhanced source-to-sink analysis can track the flow of untrusted data from its entry point (source) to a sensitive operation (sink) with greater accuracy, even through complex code transformations and inter-procedural calls.
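The reachability half of this can be sketched as a graph search. Assuming a call graph has already been extracted (the function names below are hypothetical), a breadth-first search from attacker-reachable entry points separates findings worth prioritizing from findings in code no entry point can reach. This is function-level reachability only; a real dataflow reachability check would also confirm that tainted data actually flows along those calls:

```python
from collections import deque


def reachable(call_graph: dict[str, list[str]], entry_points: list[str]) -> set[str]:
    """Breadth-first search over the call graph from externally reachable entry points."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        fn = queue.popleft()
        for callee in call_graph.get(fn, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def split_findings(
    findings: dict[str, str],
    call_graph: dict[str, list[str]],
    entry_points: list[str],
) -> tuple[dict[str, str], dict[str, str]]:
    """Partition findings (function -> weakness) into reachable and unreachable sets."""
    live = reachable(call_graph, entry_points)
    hot = {fn: w for fn, w in findings.items() if fn in live}
    cold = {fn: w for fn, w in findings.items() if fn not in live}
    return hot, cold


# Hypothetical call graph: legacy_export is no longer wired to any HTTP handler.
graph = {
    "http_get_order": ["load_order"],
    "load_order": ["run_sql"],
    "legacy_export": ["run_raw_sql"],
}
```

Both `run_sql` and `run_raw_sql` might trigger the same SQL injection rule, but only the first sits on a path from `http_get_order`, so it is the one a reachability-aware tool would surface first.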

This advanced analysis is crucial for uncovering complex logic flaws that might involve multiple functions, files, or even microservices. By understanding the full context and potential interactions, AI can pinpoint subtle vulnerabilities that traditional SAST, limited by its scope and rule sets, would typically miss. This leads to a much higher signal-to-noise ratio, with some AI-based triage engines reporting reductions in false positives of up to 95%. This means security teams receive findings that are more trustworthy and directly actionable, improving their efficiency and overall security posture.

The Impact of AI-Generated Code Fixes

Beyond detection, AI is making significant strides in the realm of remediation. AI-generated code fixes, also known as auto-remediation, are transforming the developer’s experience by providing immediate, context-aware suggestions for fixing identified vulnerabilities. These fixes can range from simple syntax adjustments to more complex logical changes.

Many AI SAST tools now integrate these auto-remediation features directly into developer workflows. For example, they can generate merge-ready pull requests (PRs) with proposed fixes, allowing developers to review, accept, or modify them with minimal disruption. The effectiveness of these fixes depends heavily on the AI model’s training and its ability to understand the specific code context. Crucially, these systems often incorporate fix validation mechanisms that automatically test the proposed changes to ensure they resolve the vulnerability without introducing new bugs or breaking existing functionality. This capability significantly reduces remediation time and developer friction, as developers spend less time manually researching and implementing fixes, and more time focusing on core development tasks. The goal is to make security a seamless part of the coding process, enabling developers to ship secure code faster.
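A minimal sketch of the fix-validation gate described above, assuming the candidate fix is a drop-in replacement function and that regression cases plus an exploit check exist for the finding. All names are hypothetical, and real tools run full test suites rather than single functions. A proposed patch is accepted only if the exploit no longer works and existing behavior is preserved:

```python
import html
from typing import Callable


def validate_fix(
    patched: Callable[[str], str],
    regression_cases: list[tuple[str, str]],
    exploit_input: str,
    is_exploited: Callable[[str], bool],
) -> bool:
    """Accept a candidate fix only if it blocks the exploit and passes every regression case."""
    if is_exploited(patched(exploit_input)):
        return False  # the vulnerability is still present
    return all(patched(arg) == expected for arg, expected in regression_cases)


# Hypothetical finding: reflected XSS in a greeting renderer.
def render_original(name: str) -> str:
    return f"<p>Hello {name}</p>"


def render_candidate(name: str) -> str:
    # AI-proposed fix: HTML-escape user input before interpolation.
    return f"<p>Hello {html.escape(name)}</p>"


regression = [("Ada", "<p>Hello Ada</p>")]
exploit = "<script>alert(1)</script>"


def contains_script(output: str) -> bool:
    return "<script>" in output
```

Checking both conditions matters: a "fix" that simply returns a constant string would block the exploit but fail the regression case, which is exactly the kind of broken change validation is meant to catch.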

Operationalizing AI Security within Developer Workflows

The true value of AI SAST tools is realized when they seamlessly integrate into the daily rhythm of development. Frictionless integration is key to developer adoption and ensuring that security becomes an intrinsic part of the software development lifecycle, rather than a bottleneck.

Modern AI SAST solutions prioritize integration across various touchpoints:

- IDE Plugins: Real-time feedback within the Integrated Development Environment (IDE) allows developers to catch and fix vulnerabilities as they write code. AI-powered suggestions can appear directly in the editor, providing immediate guidance and even auto-completing secure code patterns.

- CI/CD Pipelines: Integrating SAST into Continuous Integration/Continuous Delivery (CI/CD) pipelines ensures that every code commit or pull request (PR) is automatically scanned. AI enhances this by intelligently prioritizing findings and providing actionable feedback directly in the PR comments, streamlining the code review process.

- PR Feedback: AI can analyze code changes in a PR, identify new vulnerabilities or regressions, and provide concise, context-rich feedback to developers and reviewers. This helps maintain code quality and security standards before changes are merged.

- Jira Integration: Integrating with issue trackers like Jira automatically converts security findings into tickets, assigns them to relevant developers, and tracks them through to resolution, ensuring accountability and visibility.
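One common way to keep PR feedback focused is to comment only on findings in lines the PR actually changed and to fail the pipeline only above a severity threshold. A minimal sketch of such a gate, assuming findings and the PR's changed lines have already been extracted (the tuple layout and default threshold are assumptions, not a specific tool's format):

```python
SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2, "critical": 3}


def gate(
    findings: list[tuple[str, int, str, str]],
    changed_lines: dict[str, set[int]],
    fail_at: str = "high",
) -> tuple[list[str], bool]:
    """Return (PR comments, should_fail) for findings on lines changed in this PR.

    findings: (path, line, severity, message) tuples from the scanner
    changed_lines: path -> set of line numbers touched by the PR
    """
    in_diff = [f for f in findings if f[1] in changed_lines.get(f[0], set())]
    comments = [f"{path}:{line} [{sev}] {msg}" for path, line, sev, msg in in_diff]
    should_fail = any(
        SEVERITY_RANK[sev] >= SEVERITY_RANK[fail_at] for _, _, sev, _ in in_diff
    )
    return comments, should_fail
```

In a pipeline, a wrapper script would post `comments` to the PR and exit with a nonzero status when `should_fail` is true. Pre-existing findings outside the diff are not ignored under this design; they belong in the backlog, for example as tracked Jira tickets.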

By embedding security checks and remediation assistance directly into these familiar workflows, AI SAST tools reduce the “developer productivity tax” and foster a culture of secure coding.

When evaluating AI SAST tools during pilots, teams should focus on key metrics beyond just vulnerability counts:

- Scan Speed: How quickly does the tool analyze code, especially in CI/CD pipelines? Some vendors report median CI scan times of around 10 seconds.

- False Positive Rate: What percentage of reported vulnerabilities are not genuine? Lower rates indicate a higher signal and less wasted developer time.

- Fix Acceptance Rate: How often are AI-generated fixes accepted and merged by developers? A high rate indicates effective and trustworthy suggestions.

- Mean Time to Remediation (MTTR): How long does it take from vulnerability detection to its resolution? AI should significantly reduce this.

- Developer Feedback: Is the feedback clear, actionable, and integrated seamlessly into their workflow?
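The quantitative metrics above reduce to simple ratios and averages once pilot data is recorded. A sketch, assuming each finding from the pilot was labeled by a reviewer and timestamped (the `PilotFinding` record and its fields are hypothetical):

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class PilotFinding:
    true_positive: bool               # reviewer verdict after manual triage
    fix_suggested: bool               # did the tool propose an AI-generated fix?
    fix_merged: bool                  # was that suggested fix merged?
    detected_at: datetime
    resolved_at: Optional[datetime]   # None while the finding is still open


def pilot_metrics(findings: list[PilotFinding]) -> dict[str, float]:
    """False-positive rate, fix acceptance rate, and MTTR in hours (assumes findings is non-empty)."""
    fp_rate = sum(not f.true_positive for f in findings) / len(findings)
    suggested = [f for f in findings if f.fix_suggested]
    acceptance = sum(f.fix_merged for f in suggested) / len(suggested) if suggested else 0.0
    # MTTR only counts genuine findings that were actually resolved.
    resolved = [f for f in findings if f.true_positive and f.resolved_at is not None]
    if resolved:
        total = sum((f.resolved_at - f.detected_at for f in resolved), timedelta())
        mttr_hours = (total / len(resolved)) / timedelta(hours=1)
    else:
        mttr_hours = float("nan")  # undefined until something is resolved
    return {
        "false_positive_rate": fp_rate,
        "fix_acceptance_rate": acceptance,
        "mttr_hours": mttr_hours,
    }
```

Computing the same numbers for the incumbent tool over the same period gives the baseline the pilot is meant to beat.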

Data Privacy and Compliance in the AI Era

The rise of AI SAST introduces new considerations, particularly regarding data privacy and compliance. Many AI models, especially LLMs, are cloud-based and may involve sending proprietary source code to external services for analysis. This raises concerns about data residency, intellectual property, and compliance with regulations such as GDPR, attestation frameworks such as SOC 2, and industry-specific standards.

Organizations must carefully evaluate how AI SAST tools handle their code:

- External Models: Understand if code is transmitted to third-party AI models and what data governance policies are in place. Are code snippets anonymized or obfuscated?

- On-premises Deployment: Some tools offer on-premises deployment or integration with an organization’s private LLM instances. This ensures that sensitive code never leaves the controlled environment.

- Bring-Your-Own-LLM: A few solutions support integrating with an organization’s self-hosted or privately managed LLMs, offering maximum control over data.

- Sandboxed Analysis: Some tools employ secure sandboxed environments for AI verification, ensuring that code remains isolated during analysis.
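Where code must leave the controlled environment, one mitigation the questions above point to is scrubbing snippets before transmission. A minimal sketch, assuming a regex-based redactor for obvious secrets; the patterns are illustrative and far from exhaustive, and production secret detection needs much broader coverage:

```python
import re

# Illustrative patterns only: quoted values assigned to credential-like names,
# and AWS-style access key IDs ("AKIA" followed by 16 uppercase letters/digits).
REDACTIONS = [
    (
        re.compile(r"""(?i)\b(\w*(?:password|passwd|secret|api_key|token)\w*)\s*=\s*(['"]).*?\2"""),
        r"\1 = \2<REDACTED>\2",
    ),
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "<REDACTED_AWS_KEY_ID>"),
]


def redact(snippet: str) -> str:
    """Replace likely secrets in a code snippet before it is sent to an external model."""
    for pattern, replacement in REDACTIONS:
        snippet = pattern.sub(replacement, snippet)
    return snippet
```

Redaction like this reduces exposure but does not address intellectual-property concerns about the code itself, which is why on-premises and bring-your-own-LLM options remain relevant.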

Choosing a tool that aligns with an organization’s data privacy requirements and compliance obligations is paramount, especially for those operating in highly regulated industries.

Strategic Selection of AI SAST Tools

Selecting the right AI SAST tool requires a strategic approach, considering an organization’s unique context:

- Team Size and Maturity Level: Smaller, agile teams might benefit from tools with quick setup, intuitive IDE integrations, and strong auto-remediation. Larger enterprises with established AppSec programs might need more customizable rules, robust reporting, and extensive language support.

- Tech Stack: Ensure the tool provides broad, deep language and framework coverage across all technologies in your codebase. Some tools support over 35 languages and 80 frameworks, while others specialize in a few.

- Specific Security Needs: What are the primary pain points? Is it an overwhelming number of false positives? Slow remediation cycles? Difficulty detecting business logic flaws? The chosen tool should directly address these challenges.

- Custom Rules: The ability to write custom rules is vital for addressing unique business logic, proprietary frameworks, or specific compliance requirements.

- Deployment Options: Consider whether a SaaS solution, on-premises deployment, or a hybrid model best fits your infrastructure and security policies.

- Scalability: The tool should efficiently handle large-scale enterprise codebases and complex monorepos, maintaining consistent performance as your codebase grows.
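Custom rules are usually written in a tool-specific rule language. As a tool-agnostic sketch of the idea, assume a proprietary framework in which calls to a hypothetical `internal_db.raw_query` bypass the approved data-access layer and a hypothetical `legacy_auth.skip_mfa` helper must never ship. A small registry of banned calls can then be checked with Python's `ast` module:

```python
import ast

# Hypothetical organization-specific rules: dotted call name -> message.
CUSTOM_RULES = {
    "internal_db.raw_query": "Use the approved repository layer instead of raw queries.",
    "legacy_auth.skip_mfa": "MFA bypass helpers must not be used in production code.",
}


def dotted_name(node: ast.AST) -> str:
    """Rebuild 'a.b.c' from a Name/Attribute chain; return '' for anything else."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = dotted_name(node.value)
        return f"{base}.{node.attr}" if base else ""
    return ""


def check_custom_rules(source: str) -> list[tuple[int, str]]:
    """Return (line, message) for every call that matches a custom rule."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = dotted_name(node.func)
            if name in CUSTOM_RULES:
                hits.append((node.lineno, CUSTOM_RULES[name]))
    return sorted(hits)
```

Matching on the literal dotted name misses aliased imports such as `import internal_db as db`; resolving aliases and wrappers is part of what a production rule engine adds.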

A thorough pilot program that measures the key metrics mentioned above is essential to validate a tool’s effectiveness in a real-world environment before full-scale adoption.

Frequently Asked Questions about AI SAST

How does AI reduce false positives in security scanning?

AI significantly reduces false positives by leveraging contextual understanding, dataflow reachability analysis, and intelligent noise filtering. Instead of simply flagging code that matches a pattern, AI can reason about whether a vulnerability is actually exploitable within the application’s specific context. It can trace data flows more accurately, understand architectural nuances, and learn from past triage decisions to refine its detection. This leads to a much higher signal-to-noise ratio, with some solutions reporting reductions in false positives of up to 95%.
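The simplest deterministic form of "learning from past triage decisions" is suppressing findings a reviewer has already dismissed. A minimal sketch, assuming findings are fingerprinted by rule ID, file path, and the whitespace-normalized offending line rather than its line number, so a suppression survives unrelated edits that shift code up or down. The fingerprint scheme and class are assumptions for illustration; AI-based triage aims to generalize further, to similar code it has not seen before:

```python
import hashlib


def fingerprint(rule_id: str, path: str, code_line: str) -> str:
    """Stable ID for a finding that ignores line numbers and whitespace changes."""
    normalized = " ".join(code_line.split())
    return hashlib.sha256(f"{rule_id}|{path}|{normalized}".encode()).hexdigest()


class TriageMemory:
    """Remembers findings that reviewers marked as false positives."""

    def __init__(self) -> None:
        self.dismissed: set[str] = set()

    def mark_false_positive(self, rule_id: str, path: str, code_line: str) -> None:
        self.dismissed.add(fingerprint(rule_id, path, code_line))

    def filter(self, findings: list[tuple[str, str, str]]) -> list[tuple[str, str, str]]:
        """Drop (rule_id, path, code_line) findings matching a previously dismissed one."""
        return [f for f in findings if fingerprint(*f) not in self.dismissed]
```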

What is the difference between AI-powered and AI-native SAST?

The key difference lies in where AI is applied in the static analysis process. AI-powered SAST typically uses traditional SAST engines for core detection, then applies AI post-analysis to enhance triage, prioritization, explanation, and remediation suggestions. The AI augments the output of an existing, often rule-based, system. In contrast, AI-native SAST integrates AI, particularly LLMs, directly into its detection engine. It uses AI for core contextual reasoning, understanding code behavior, and identifying vulnerabilities from the ground up, leading to more accurate initial findings and inherently lower false positives.

Can AI SAST tools detect business logic vulnerabilities?

Yes, AI-native SAST tools are particularly well-suited to detecting business logic vulnerabilities, which have historically been a blind spot for traditional SAST tools. Because AI-native tools leverage LLMs for deep contextual understanding and project-level analysis, they can reason about the intended logic and data flows across an application. This allows them to identify deviations or flaws in how an application processes information, handles authentication, or manages transactions, which might lead to security vulnerabilities that don’t fit into conventional pattern-matching rules.

Conclusion

The landscape of application security is undergoing a profound transformation, driven by the rapid advancements in Artificial Intelligence. As development cycles accelerate and codebases grow in complexity, traditional security approaches alone are no longer sufficient to manage security debt and ensure the continuous delivery of secure software. AI-powered SAST tools offer a compelling solution, enabling us to detect vulnerabilities earlier with greater accuracy and to integrate security seamlessly into developer workflows.

Whether an organization opts for AI-powered enhancements to existing SAST or adopts AI-native engines, the expected benefits are similar: fewer false positives, faster remediation, and a more robust security posture. The future of application security is likely to involve hybrid approaches that combine the deterministic reliability of traditional SAST with the contextual reasoning of AI. By strategically selecting and operationalizing these tools, organizations can future-proof their application security programs, ensuring that innovation and security advance hand in hand.